¯\_(ツ)_/¯

Major changes impossible?

Guessing at sprint planning?

Late deliverables & stalled projects?

Plan with CodeLogic.

(┛ಠ_ಠ)┛彡┻━┻

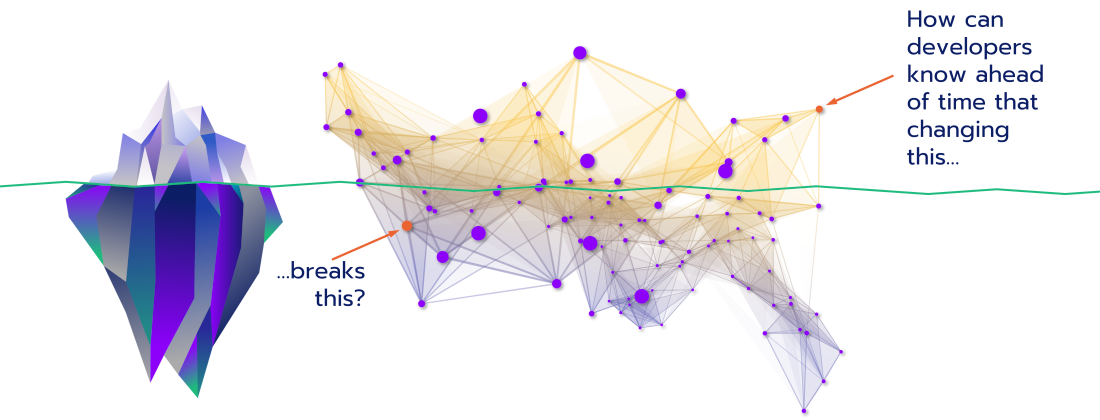

Worried about breaking old code?

Are your functional tests always lagging?

Do you fear Friday night deployments?

Know before with CodeLogic.

ಥ╭╮ಥ

Can you see the what, but not the why?

Does ever-expanding complexity keep you up?

Do things change for “no reason”?

Get clarity with CodeLogic.

<Code Fearlessly/>

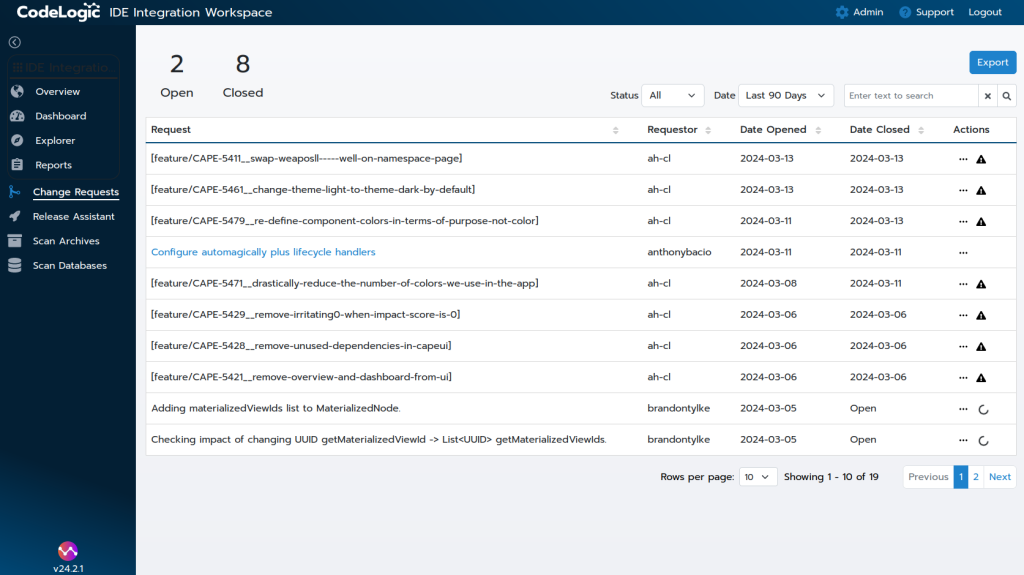

Planning

- Use CodeLogic to plan major changes

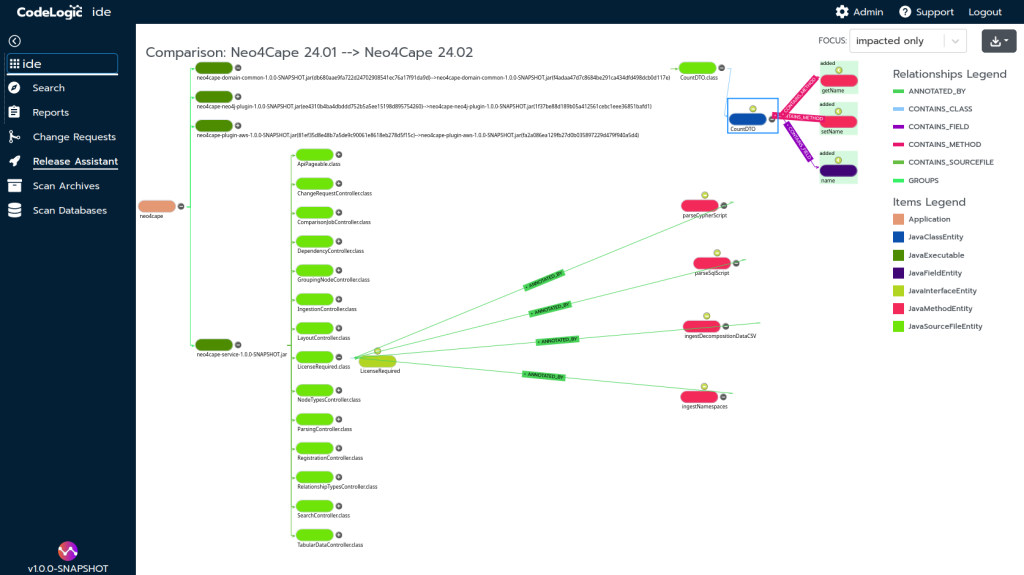

- See touchpoints and changes for version upgrades to libraries and frameworks

- Understand major impacts between component versions

- Accurately plan and scope tickets

- Quickly assess the breadth and depth of a component’s dependencies

- Detect and understand endpoints overlooked by other tools

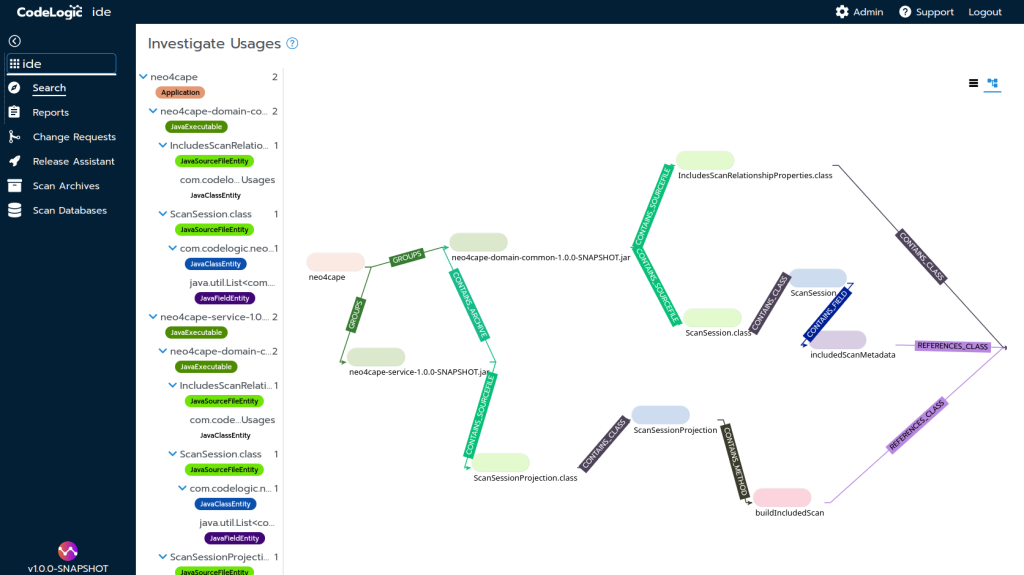

Development

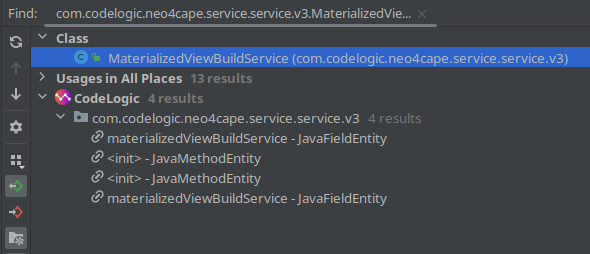

- Use CodeLogic to inspect impact during development

- View effects within projects and across API and project boundaries from the IDE

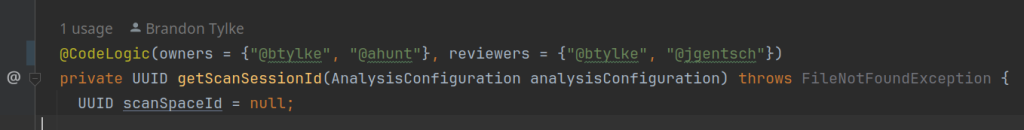

- Use annotations to protect critical sections of code

- Get notifications when sensitive code is impacted

Integration

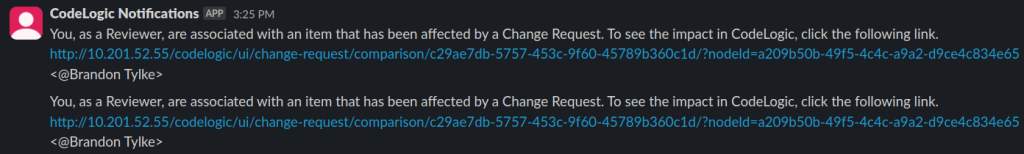

- Review differential impact of pull and merge requests

- Receive impact alerts on annotation hits via Slack

- Understand if something is about to be impacted by proposed changes

- Understand when tests are impacted by changes

Build

- Use CodeLogic to review post-build issues

- Review recent changes to understand where a dependency impact originated

- Halt builds or deployments using CodeLogic API integrations

- Extend agent infrastructure with plugins

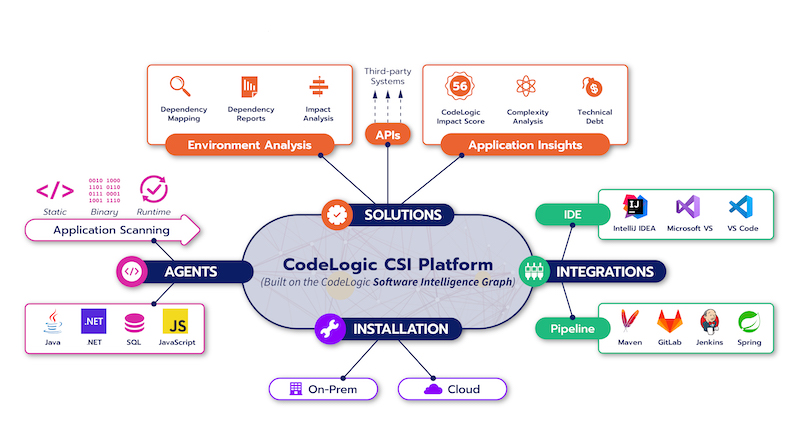

CodeLogic integrates with the most popular programming languages, databases, IDEs, and CI/CD platforms

Languages

- Java

- JavaScript (including React, Angular, Node.js, and other frameworks)

- C# and other .NET languages

Databases

- All SQL databases with JDBC support (including MySQL, Oracle, Postgres, and MS SQL Server)

- Stored procedure support for Oracle (PL/SQL), Postgres (PL/pgSQL), and SQL Server (Transact-SQL)

IDEs

- Visual Studio

- VS Code

- IntelliJ IDEA

Development pipeline integrations

- Maven

- Jenkins

- GitLab

- GitHub

- Custom Docker image to integrate with Gradle and other tools

“No other solution can store and reference relationships between various software elements and traverse different languages, frameworks, data models and underlying infrastructures.”

Get started with CodeLogic today

Let our expert team guide you on your journey to coding fearlessly